Joerg Moellenkamp’s post explaining CacheFS has me excited:

Long ago, admins didn’t want to manage dozens of operating system installations. Instead of this they wanted to store all this data on a central fileserver (you know, the network is the computer). Thus netbooting Solaris and SunOS was invented. But there was a problem: All the users started to work at 9 o’clock. They switched on their workstations and the load on the fileserver and the network got higher and higher. Thus the idea of CacheFS [as a way of using the speed of local disk and the convenience of central management] was born.

Remove the corporate office and the uncaring sysadmins, and CacheFS might be exactly what I’m looking for to elastically expand the capacity of my laptop’s internal storage. This isn’t some newfangled technology: Sun developed it in the early 90s, and it’s been available for Linux since 2003 (try it in Gentoo). And most importantly, it’s “designed to be as transparent as possible to a user of the system. Applications should just be able to use NFS files as normal, without any knowledge of there being a cache.”

The local cache isn’t expected to be a complete mirror of the remote filesystem, just the recently opened files. So the capacity of your local disk is limited only by your willingness to wait for files to be retrieved from the network. The biggest problem is figuring out what happens when the network isn’t available. CacheFS doesn’t appear to solve that and would likely fail if the network dropped.
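To make the idea concrete for myself, here's a toy sketch of that behavior in Python. It is not how CacheFS is actually implemented. The `ReadThroughCache` class, the `make_remote` helper, and the least-recently-used eviction policy are all my own assumptions for illustration. It just shows a small local store in front of a slow remote one, and what happens when the network goes away.

```python
from collections import OrderedDict


class ReadThroughCache:
    """Toy model of a partial local cache in front of a remote file store.

    Hypothetical illustration only; real CacheFS works at the filesystem layer.
    """

    def __init__(self, fetch_remote, capacity):
        self.fetch_remote = fetch_remote  # assumed callable: path -> bytes
        self.capacity = capacity          # max number of files kept locally
        self.local = OrderedDict()        # path -> bytes, least recent first

    def open(self, path):
        if path in self.local:
            self.local.move_to_end(path)  # mark as most recently used
            return self.local[path]
        # Cache miss: go to the network. This raises if the network is down.
        data = self.fetch_remote(path)
        self.local[path] = data
        if len(self.local) > self.capacity:
            self.local.popitem(last=False)  # evict the least recently used file
        return data


def make_remote(files, online):
    """Fake remote server. `online` is a one-item list so callers can flip it."""
    def fetch(path):
        if not online[0]:
            raise ConnectionError("network unavailable")
        return files[path]
    return fetch
```

With a capacity of two files, opening `a`, `b`, and `c` in turn pushes `a` out of the local cache. If the network then drops, `c` still opens from local disk, but asking for `a` raises `ConnectionError`. That's exactly the gap I'd want a real solution to handle more gracefully.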

I know nothing about filesystem development, but this challenge is interesting enough to make me consider jumping in. The availability of a partial solution helps too.

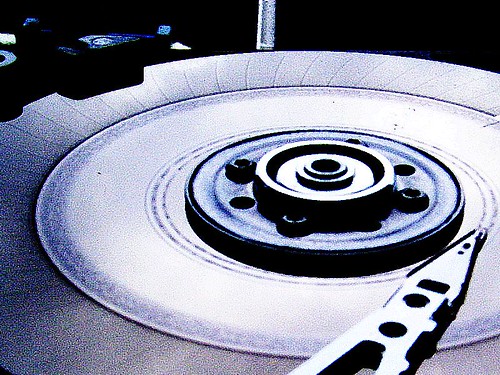

Ups to hindesite for the sweet drive photo. Too bad about what happened to it, though.